Livy session errors in Azure Synapse notebooks

If you work with notebooks in Azure Synapse Analytics and several people use the same Spark pool at the same time, chances are high that you have seen one of the errors below:

"Livy session has failed. Session state: error code: AVAILABLE_WORKSPACE_CAPACITY_EXCEEDED. Your job requested 24 vcores. However, the workspace only has 2 vcores available out of quota of 50 vcores for node size family [MemoryOptimized]."

"Failed to create Livy session for executing notebook. Error: Your pool's capacity (3200 vcores) exceeds your workspace's total vcore quota (50 vcores). Try reducing the pool capacity or increasing your workspace's vcore quota. HTTP status code: 400."

"InvalidHttpRequestToLivy: Your Spark job requested 56 vcores. However, the workspace has a 50 core limit. Try reducing the numbers of vcores requested or increasing your vcore quota. Quota can be increased using Azure Support request"

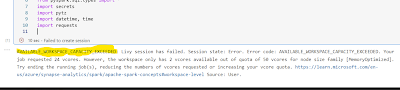

Fig 1 shows one of these errors as it appears while running a Synapse notebook.

Fig 1: Available workspace capacity exceeded error
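All three messages carry the same few numbers: the vCores requested, the vCores still available, and the workspace quota. As a rough sketch, the snippet below pulls those numbers out of a message so they can be compared or logged. The regular expressions are written only against the messages quoted above (shortened here), and the function name `parse_vcore_error` is made up for this illustration; real messages may be worded differently.

```python
import re

# Patterns written against the error messages quoted in this post.
# They are illustrative, not an official message format.
PATTERNS = {
    "requested": re.compile(r"requested\s+(\d+)\s+vcores", re.IGNORECASE),
    "available": re.compile(r"only has\s+(\d+)\s+vcores", re.IGNORECASE),
    "quota": re.compile(
        r"(?:quota of|quota \(|has a)\s*(\d+)\s+(?:vcores|core limit)",
        re.IGNORECASE,
    ),
}


def parse_vcore_error(message):
    """Return the vCore numbers found in a Livy capacity error message."""
    found = {}
    for name, pattern in PATTERNS.items():
        match = pattern.search(message)
        if match:
            found[name] = int(match.group(1))
    return found


FIRST_ERROR = (
    "Your job requested 24 vcores. However, the workspace only has 2 vcores "
    "available out of quota of 50 vcores for node size family [MemoryOptimized]."
)
THIRD_ERROR = (
    "Your Spark job requested 56 vcores. However, the workspace has a 50 core limit."
)

print(parse_vcore_error(FIRST_ERROR))  # {'requested': 24, 'available': 2, 'quota': 50}
print(parse_vcore_error(THIRD_ERROR))  # {'requested': 56, 'quota': 50}
```

Seen this way, the first error says that the job needs 24 vCores while only 2 of the 50 are free, and the third says that the job alone needs more than the whole quota.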

What is Livy?

Your first thought will likely be, "What exactly is this Livy session?"

Apache Livy is a service that enables easy interaction with a Spark cluster over a REST interface [1]. Whenever we execute a notebook from Azure Synapse Analytics, Livy helps it interact with the Spark engine.
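To make "over a REST interface" concrete, here is a minimal sketch of the kind of request that creates a Livy session. The field names (`kind`, `numExecutors`, `executorCores`, `executorMemory`) come from the Apache Livy REST API for `POST /sessions`. The URL is an assumption for a self-hosted Livy server on its default port 8998; Synapse runs and authenticates its own Livy endpoint, so you would not normally call it this way. The helper name `build_session_request` and the resource values are also just illustrative.

```python
import json
import urllib.request

# Assumption: a self-hosted Livy server on its default port.
# Synapse manages its own Livy endpoint and authentication.
LIVY_URL = "http://localhost:8998/sessions"


def build_session_request(num_executors, executor_cores, executor_memory):
    """Build (but do not send) a POST /sessions request for a PySpark session."""
    payload = {
        "kind": "pyspark",
        "numExecutors": num_executors,
        "executorCores": executor_cores,
        "executorMemory": executor_memory,
    }
    return urllib.request.Request(
        LIVY_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_session_request(
    num_executors=2, executor_cores=4, executor_memory="28g"
)
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
# urllib.request.urlopen(request) would send it; that is left out here
# because it needs a running Livy server.
```

The resources asked for in this request are what the vCore numbers in the error messages refer to: if the cluster cannot provide them, the session fails to start.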

Will increasing the vCores fix it?

Looking at the error message, you may be tempted to increase the vCores; however, increasing the vCores will not solve your problem.

Let's look at how a Spark pool works. By definition, a Spark pool, when instantiated, is used to create a Spark instance that processes the data. Spark instances are created when you connect to a Spark pool, create a session and run a job. Multiple users may have access to a single Spark pool. When several users run jobs at the same time, the first job may already have taken most of the vCores, so a job started by another user fails with the Livy session error.
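A small worked example may help here. The sketch below assumes that every node (one driver plus each executor) takes 4 vCores, which I believe matches the Small node size, and uses the 50 vCore quota from the error messages. The function names and the executor counts are invented for the illustration; check the node size of your own pool before relying on the numbers.

```python
VCORES_PER_NODE = 4   # assumption: Small node size
WORKSPACE_QUOTA = 50  # quota reported in the error messages


def vcores_requested(executors, vcores_per_node=VCORES_PER_NODE):
    """vCores for a session: one node for the driver plus one per executor."""
    return (executors + 1) * vcores_per_node


def can_start(executors, vcores_in_use, quota=WORKSPACE_QUOTA):
    """True if a new session still fits inside the workspace quota."""
    return vcores_in_use + vcores_requested(executors) <= quota


first_job = vcores_requested(11)   # driver + 11 executors
second_job = vcores_requested(5)   # driver + 5 executors

print(first_job)                       # 48
print(WORKSPACE_QUOTA - first_job)     # 2
print(second_job)                      # 24
print(can_start(5, vcores_in_use=first_job))  # False
print(can_start(5, vcores_in_use=0))          # True
```

With these assumed numbers the first job leaves 2 of the 50 vCores free, and a second job asking for 24 vCores is rejected, which is the same situation the first error message describes.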

How to solve it?

The problem occurs when multiple people work on the same Spark pool, or when the same user runs more than one Synapse notebook in parallel. When several data engineers share a Spark pool in Synapse, each of them can configure the session and save that configuration in their DevOps branch. Let's look at it step by step:

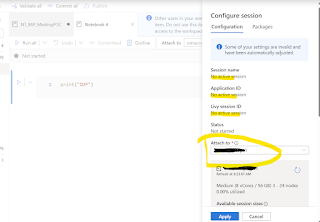

1. You will find the configuration button (a gear icon) as shown in Fig 2:

Fig 2: configuration button

Clicking the configuration button opens the Spark pool configuration details, as shown in Fig 3:

Fig 3: Session details

This is your session, as shown in Fig 3. At this point there is no active session.

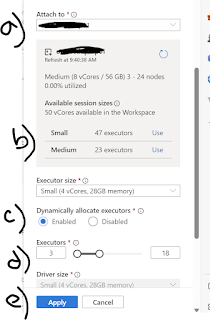

2. Activate your session by attaching the Spark pool and assigning the right resources. Fig 4 and the detailed steps below show how to avoid the Livy session error.

Fig 4: Fine-tune the Settings

a) Attach the Spark pool from the available pools (if you have more than one). I have only one Spark pool.

b) Select the session size. I have chosen Small by clicking the 'Use' button.

c) Enable 'Dynamically allocate executors'. This lets the Spark engine allocate executors dynamically.

d) Change the number of executors. For example, by default the Small session size has 47 executors; however, I have chosen 3 to 18 executors to free up the rest for other users. Sometimes you may need to go down to 1 to 3 executors to avoid the errors.

e) Finally, apply the changes to your session.

You can commit these changes to your DevOps branch for the notebook you are working on, so that you don't need to apply the same settings again.
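The same settings can also be written down as Spark properties. The sketch below builds such a configuration as a Python dictionary and prints it as JSON. The three `spark.dynamicAllocation.*` keys are standard Spark properties; the helper name `session_config` and the 4 cores / 28g per executor are assumptions for a Small session, not values taken from the screenshots. As far as I know, Synapse notebooks accept JSON of this shape in a `%%configure` cell, but verify that against the Synapse documentation before using it.

```python
import json


def session_config(min_executors, max_executors,
                   executor_cores=4, executor_memory="28g"):
    """Build a session configuration with dynamic executor allocation.

    executor_cores and executor_memory are assumed values for a Small session.
    """
    if not 1 <= min_executors <= max_executors:
        raise ValueError("need 1 <= min_executors <= max_executors")
    return {
        "executorCores": executor_cores,
        "executorMemory": executor_memory,
        "conf": {
            "spark.dynamicAllocation.enabled": "true",
            "spark.dynamicAllocation.minExecutors": str(min_executors),
            "spark.dynamicAllocation.maxExecutors": str(max_executors),
        },
    }


# Step d) above: 3 to 18 executors instead of the default.
config = session_config(min_executors=3, max_executors=18)
print(json.dumps(config, indent=4))
```

Keeping the limits in one small function makes it easy to drop down to 1 to 3 executors when the pool is busy, as mentioned in step d).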

In addition, since the workspace's total vCore quota is 50, you can raise a request with Microsoft to increase the vCores for your organization.

In summary, the Livy session error is common when multiple data engineers work in the same Spark pool. It is therefore important to understand how to set up your session correctly, so that more than one session can run at the same time.